Table of Contents >> Show >> Hide

- Table of contents

- What AI changes (and what it doesn’t)

- Plaid’s lens: platform + data as an AI advantage

- Brex’s lens: AI-native operations and workflow control

- The shared playbook: 7 principles for AI-era product

- Specific examples: fintech-flavored, reality-tested

- Field notes (): common “oh no” moments and fixes

- 1) The first demo is a polite liar

- 2) “Accuracy” is not one number

- 3) Users don’t want a chatbot; they want a shortcut

- 4) Evidence beats eloquence

- 5) Make uncertainty a first-class UI element

- 6) Governance isn’t paperworkit’s safety infrastructure

- 7) AI tools change throughput, which changes coordination

- 8) Human fallback is design, not defeat

- Conclusion

AI didn’t just show up at the product partyit rearranged the furniture, changed the music, and now it’s asking where you keep the metrics dashboard. In fintech, that’s equal parts exciting and terrifying, because “move fast” has always had an asterisk: *and don’t break trust*.

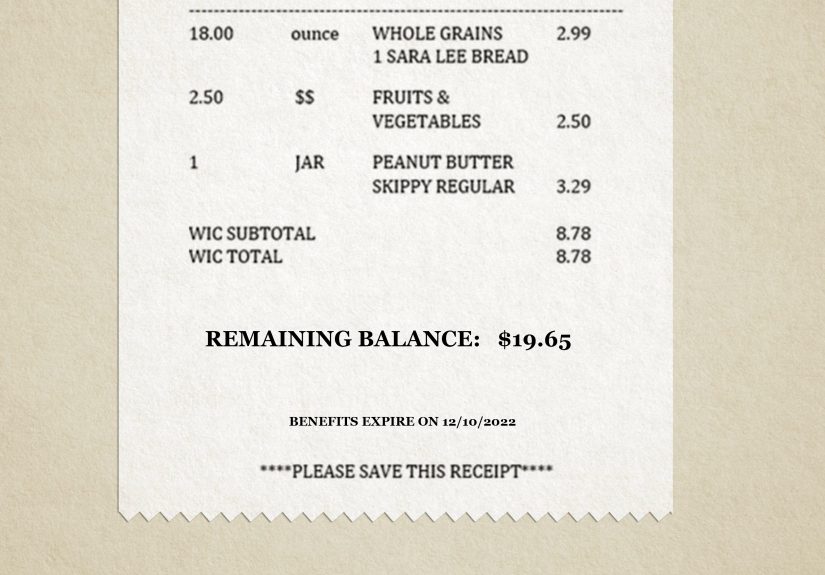

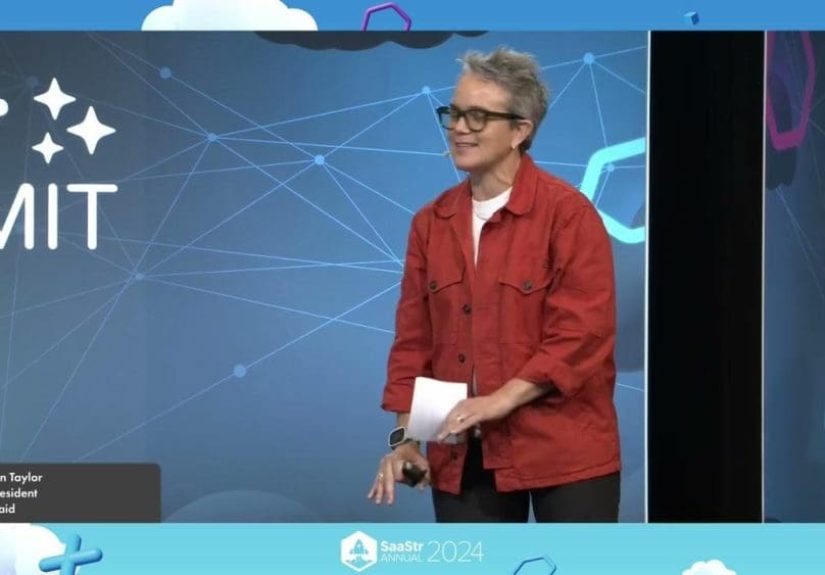

A well-circulated SaaS community session at SaaStr featured two leaders building right at that intersection: Jen Taylor, President of Plaid, and Karandeep (“Karan”) Anand, then President of Brex. Brex has since continued to evolvetoday Ben Gammell holds the President and CFO rolebut the lessons still travel well: build faster with AI, yes, while designing workflows that customers (and auditors) can understand, defend, and rely on.

Below is a practical guide to “building product in the age of AI” through a Plaid-and-Brex lens: platform fundamentals, AI-native operations, and a playbook you can apply even if you don’t have a dedicated ML team and a budget the size of a small moon.

What AI changes (and what it doesn’t)

The job is still: solve a real problem for a real customer

AI lowers the cost of building something. It doesn’t lower the cost of building the right thing. In fact, it can make it easier to ship the wrong thing fasternow with better grammar.

In fintech workflows, the “right thing” usually means three outcomes:

- Less effort: fewer steps, fewer manual checks, fewer copy-paste pilgrimages to spreadsheets.

- Better decisions: clearer anomalies, smarter prioritization, fewer false alarms.

- More trust: explanations, audit trails, and predictable behavior under stress.

Your user is now a duo: human + copilot

Customers increasingly show up with an assistant in the seat next to themasking “why was this flagged?” or “draft the policy-compliant note.” That changes product design. You’re not just shipping a UI for clicks; you’re shipping a system that can:

- Explain itself in plain language,

- Act in safe, reviewable steps (recommend → draft → approve → execute), and

- Fail gracefully when data is missing or uncertainty is high.

Plaid’s lens: platform + data as an AI advantage

Plaid is a platform. Platform product is about making other builders successfulreliably, securely, and at scale. AI doesn’t replace that. It makes it more valuable, because AI features are only as good as the data and permissions beneath them.

1) Data quality is the hidden “model” behind every model

LLMs can write, summarize, and reasonbut they can’t redeem inconsistent inputs. In fintech, the difference between “helpful insight” and “confident nonsense” is often data hygiene: consistent schemas, permissioned access, and clear provenance. A platform that normalizes and secures data connections gives AI features something sturdy to stand on.

2) Internal AI adoption is a competitive moat

Plaid has publicly shared how it approached AI coding adoption across engineering as a deliberate effortchanging habits at scale while maintaining quality. The lesson for product leaders is not “buy a tool.” It’s: operationalize AI usage with enablement, guardrails, and measurable outcomes. When engineering gets faster, product discovery, risk reviews, and launch readiness often become the new bottlenecks. Modern teams adjust the whole pipeline, not just the code stage.

3) Prototype fast, but bring “real world” questions forward

Plaid has also highlighted faster prototyping via modern developer tooling and its APIs. That’s greatuntil the prototype meets production: inconsistent institution behavior, delayed transactions, edge cases, consent boundaries, and fraud attempts. The platform approach is to prototype quickly and start hardening early: define permissions, failure modes, and graceful degradation from day one.

Brex’s lens: AI-native operations and workflow control

Brex sells workflow: spend management, corporate cards, and finance operations. That means the product is inseparable from policy and controls. AI can accelerate those workflowsbut only if it’s built with the same seriousness as the rest of the financial system.

1) AI-native operations: make the whole company faster, not just features

Brex has described an “AI-native operations” strategy that’s refreshingly practical. It includes building AI into customer workflows, building or buying internal automation, and rolling out tools that multiply employee productivity. The product takeaway: your roadmap depends on how quickly support, risk, finance, legal, and engineering can execute together. AI that only speeds up one function creates organizational drag elsewhere.

2) Embed product teams into outcomes

Karandeep Anand has discussed a modern product approach: reduce handoffs, tighten loops with frontline teams, and own outcomesnot just requirements. That’s especially powerful for AI, because AI systems need monitoring, evaluation, and iteration. If product teams aren’t close to real operating signals (fraud trends, escalation reasons, onboarding drop-off), AI features can drift from “helpful” to “hazard.”

3) Explainability is the UX that buys trust

When AI flags an expense or suggests a policy exception, users need evidencenot vibes. Great fintech AI experiences include: the transaction context, the relevant policy clause, the historical baseline, and a recommended next step. The goal is to help a reviewer make a decision faster and feel comfortable defending it later.

The shared playbook: 7 principles for AI-era product

1) Start with workflows, not models

Map the workflow and identify high-friction moments: decisions, investigations, documentation, and handoffs. In fintech, high-leverage zones include expense review, support investigation, onboarding/compliance, fraud/disputes, and cash-flow analysis.

2) Build trust rails before autonomy

AI should recommend fast and execute carefully. Add human checkpoints for high-impact actions, log inputs/outputs, constrain what the system can do, and create a clean fallback path when uncertainty is high.

3) Treat evaluation as a product feature

Create offline test sets (edge cases + red-team prompts), online metrics (time saved, error rate, escalation rate), and recurring human quality reviews. If you can’t measure quality, you can’t responsibly expand scope.

4) Design “review, edit, approve” loops

Most durable AI value is drafting and suggesting. Make outputs editable, show evidence, and make it easy to escalate to a human process without forcing users to start over.

5) Make privacy and permissions visible

In finance workflows, consent boundaries aren’t a footnotethey’re the product. Make data access explicit, minimize what models see, and avoid “silent” sharing between contexts.

6) Ship in thin slices, then harden

Start narrow: one category of tickets, one policy rule, one segment. Prove reliability and ROI, then expand. Fintech rewards compounding, not fireworks.

7) Build AI literacy into the org

Plaid’s focus on AI-enabled engineering and Brex’s focus on AI-native operations point to one truth: AI is an organizational capability. Product, security, risk, legal, and support need shared standards and a repeatable way to ship safely.

Specific examples: fintech-flavored, reality-tested

Example A: “Why was this flagged?” explanations for spend review

What you build: For each flagged expense, generate a short explanation with evidence (policy clause, merchant category, amount vs. user baseline, missing receipt). Add an editable draft note the reviewer can send.

Guardrails: Never auto-deny; require a human click. Log evidence used. Provide a clear “mark as expected” feedback action to reduce future noise.

Example B: AI-assisted onboarding checklists

What you build: A guided checklist that reads submitted documents, identifies missing fields, and drafts plain-English requests. “Your EIN letter is missing page 2” beats “REJECTED,” every time.

Guardrails: Show what was extracted; let users correct it; mask sensitive data in previews.

Example C: AI-accelerated engineering with quality controls

What you build: Pair AI coding assistance with stronger automated testing and review. Generate tests alongside code, summarize diffs for reviewers, and enforce baselines (coverage, lint, security checks). Faster output only helps if the error rate doesn’t also get a performance review.

Field notes (): common “oh no” moments and fixes

These are patterns teams repeatedly encounter when they move from “AI demo” to “AI product.” They’re written as generalized lessons drawn from public best practices and the reality of shipping AI into high-trust workflowsso you can learn them without needing to personally collect a museum of production incidents.

1) The first demo is a polite liar

Prototypes look brilliant because you feed them clean inputs and ask friendly questions. Production is less polite: messy transactions, missing context, users who type like they’re speedrunning a CAPTCHA. Fix: build a “top 50 ugly cases” test set early and run it every week.

2) “Accuracy” is not one number

Drafting a message, extracting fields, flagging anomalies, and explaining decisions are different tasks with different risk. Define acceptance criteria per task. A model can be great at summarization and still terrible at policy enforcement. That’s not hypocrisy; it’s math.

3) Users don’t want a chatbot; they want a shortcut

Chat is great for exploration, but finance teams want outcomes: “Draft the receipt request,” “Explain the variance,” “List missing onboarding items.” Build action-oriented UI, not an existential conversation about expense categories.

4) Evidence beats eloquence

LLMs can write beautiful nonsense. In fintech, beautiful is suspicious. Anchor outputs to evidence: show the transaction, the policy clause, the precedent. When evidence is missing, the system should say so and route to review.

5) Make uncertainty a first-class UI element

People trust systems that admit uncertainty more than systems that bluff. Use “needs review” states, confidence cues, and one-click escalation. Your model should have permission to say “I’m not sure,” because your business does not have permission to be wrong quietly.

6) Governance isn’t paperworkit’s safety infrastructure

Decide who owns prompts, policies, and evaluation. Log model versions. Review changes like you review risk controls. In regulated environments, you may need to explain not only what the system did, but why you believed it was safe to let it do that.

7) AI tools change throughput, which changes coordination

When engineers and operators move faster, the constraint shifts to alignment and review queues. Fix: shorten planning cycles, clarify ownership, and make risk reviews fast and consistent. Otherwise you build the world’s fastest engine and then park it behind a slow committee.

8) Human fallback is design, not defeat

Great AI products know when to hand off. Build graceful fallbacks that preserve context and drafts so users don’t repeat themselves. A good handoff feels like a relay race; a bad one feels like “start over and regret everything.”

The takeaway: Plaid’s platform discipline plus Brex’s operations discipline equals a durable AI strategy: high-quality data, workflow-first design, measurable evaluation, and trust rails that scale. Do that, and you can ship faster and sleep betterwhich is the KPI nobody puts in the OKR doc, but everyone secretly wants.

Conclusion

Building product in the age of AI isn’t about chasing the newest model every week. It’s about building durable systems: clean data, thoughtful workflows, repeatable evaluation, and guardrails that earn trust. The presidents of Plaid and Brex represent complementary strengthsplatform reliability and operational leverageand AI rewards teams that build both muscles.

Start narrow, measure honestly, harden relentlessly, and keep the human in the loop where the stakes demand it. Your customers may not throw you a parade. But they might renew. And in B2B, that’s basically confetti.