Table of Contents

- What California finalized (and when it matters)

- FEHA in one paragraph (so we’re speaking the same language)

- What counts as an “automated-decision system” under the regulations?

- The heart of the rules: you can’t automate discrimination

- Disparate impact is the “quiet” risk that gets loud later

- Accommodation and accessibility: the rules get very practical

- Bias testing and “show your work” compliance

- Recordkeeping: the “paperwork” that becomes your shield

- Vendor liability and the “agent” problem

- Specific examples: how risk shows up in everyday workflows

- A no-panic compliance checklist (the one you’ll actually use)

- What the regulations do not mean

- Experience section: what this looks like in real organizations

- Bottom line

Informational only, not legal advice.

California just did what California does best: it looked at a fast-moving technology trend, sighed deeply,

and then wrote rules to make sure nobody gets unfairly steamrolled by it. This time, the spotlight is on

artificial intelligence and “automated-decision systems” used in hiring and other employment decisions under

the Fair Employment and Housing Act (FEHA).

If your company uses software that screens résumés, targets job ads, ranks applicants, scores video interviews,

or runs “fun” little game-based assessments (that somehow feel like a midterm exam from a class you never took),

you’re in the zone these regulations are trying to clarify. The goal isn’t to ban technology. It’s to make sure

the same old anti-discrimination rules still apply when the decision-maker wears a hoodie and runs on code.

What California finalized (and when it matters)

The California Civil Rights Council secured final approval for regulations designed to protect against potential

employment discrimination tied to AI, algorithms, and other automated-decision systems. The rules are set to take

effect on October 1, 2025. That date matters because it turns “we should probably clean this up”

into “we should have already cleaned this up.”

The big concept is simple: FEHA’s protections don’t disappear just because you outsourced part of the decision

to an automated tool. If an automated system screens people out based on protected traits, or uses a proxy that

causes the same outcome, your organization can still be responsible.

FEHA in one paragraph (so we’re speaking the same language)

FEHA is California’s core employment anti-discrimination law. It covers hiring, firing, promotion, pay, training,

and other “terms, conditions, and privileges” of employment. It also includes requirements around reasonable

accommodation (especially for disability and religion) and prohibits retaliation. The regulations don’t reinvent

FEHA; they modernize how existing principles apply when employers use automated tools.

What counts as an “automated-decision system” under the regulations?

Think of an automated-decision system (ADS) as a computational process that makes decisions or

helps humans make decisions about employment benefits: things like hiring, promotion, selection for training, and

similar opportunities.

Common ADS examples in real workplaces

- Résumé screeners that filter applicants based on keywords, degree requirements, or prior titles.

- Ad delivery tools that target job ads to specific groups (even if the targeting wasn’t “intentional”).

- Game-based or puzzle assessments that claim to measure traits like “grit,” “focus,” or “learning agility.”

- Video or audio interview analytics scoring tone of voice, facial expression, or other behaviors.

- Ranking and scheduling filters that prioritize candidates based on availability patterns.

What an ADS usually is not

Normal productivity tools (like word processors, spreadsheets, calculators, and basic IT software) typically aren’t

the target, unless they’re actually making or facilitating employment decisions. In other words: a spreadsheet

isn’t scary. A spreadsheet that automatically “red flags” applicants for rejection based on a scoring formula might be.

The heart of the rules: you can’t automate discrimination

The regulations clarify that it is unlawful for an employer (or other covered entity) to use an ADS or selection

criteria that discriminates against applicants or employees on a basis protected by FEHA.

“Selection criteria” includes more than you think

It’s not just the final “Hire / No Hire” output. The regulations treat selection criteria broadly, including

qualification standards, employment tests, and proxies. Proxies are the classic “we didn’t ask for

protected status, we just asked for something that correlates with it” problem.

Example: If a tool heavily rewards uninterrupted job history, it may disproportionately disadvantage caregivers

or people with certain medical histories. If a tool uses zip code as a “culture fit” signal, it can behave like a

proxy for race or national origin in ways that raise serious risk.

Disparate impact is the “quiet” risk that gets loud later

A tool can be “neutral” on its face and still create a disparate impact, meaning it disproportionately screens out

members of a protected group. Under long-standing selection procedure principles, an employer may need to show

the practice is job-related and consistent with business necessity (and that less discriminatory alternatives

aren’t available).
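To make "disproportionately screens out" concrete, here is a minimal sketch of one common screening check: compare each group's selection rate to the highest group's rate. The 0.8 threshold is the familiar four-fifths rule of thumb, used here as an assumption; this article does not say which metric or threshold a regulator or court would apply. The function names and the data are made up for illustration.

```python
from collections import Counter


def selection_rates(outcomes):
    """outcomes: iterable of (group, selected) pairs. Returns {group: rate}."""
    totals = Counter()
    selected = Counter()
    for group, was_selected in outcomes:
        totals[group] += 1
        if was_selected:
            selected[group] += 1
    return {group: selected[group] / totals[group] for group in totals}


def impact_ratios(rates):
    """Divide each group's selection rate by the highest group's rate."""
    top = max(rates.values())
    if top == 0:
        # Nobody was selected, so a ratio is undefined.
        return {group: None for group in rates}
    return {group: rate / top for group, rate in rates.items()}


def flag_groups(ratios, threshold=0.8):
    """Return groups whose ratio falls below the (assumed) threshold."""
    return sorted(g for g, r in ratios.items() if r is not None and r < threshold)


# Illustrative, made-up numbers: 100 applicants per group.
outcomes = (
    [("group_a", True)] * 48 + [("group_a", False)] * 52
    + [("group_b", True)] * 30 + [("group_b", False)] * 70
)
rates = selection_rates(outcomes)
ratios = impact_ratios(rates)
flagged = flag_groups(ratios)
print(rates, ratios, flagged)
```

A flag from a check like this is a prompt to investigate, not a legal conclusion. Small samples can swing the ratio a lot, so a real analysis would usually add statistical testing and a look at job-relatedness.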

Translation: “The algorithm did it” is not a defense. It’s a sentence that often ends with “…so we ran a validity

study, evaluated impact, documented our decisions, and built reasonable accommodations into the process.”

Accommodation and accessibility: the rules get very practical

One of the most concrete points in the regulations is the reminder that automated systems can create barriers for

people with disabilities (and sometimes for religious scheduling needs).

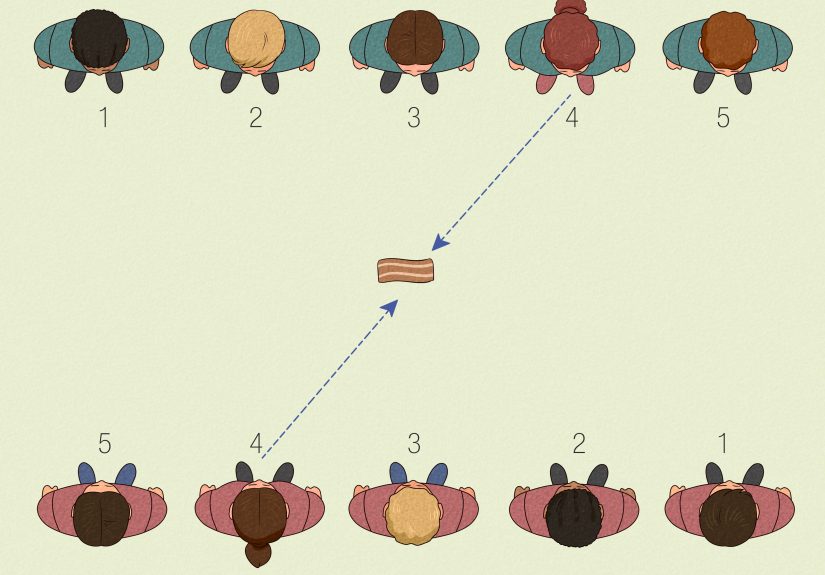

Schedule-based screening can create unlawful adverse impact

The regulations specifically call out online application technology that limits, screens out, ranks, or prioritizes

applicants based on their schedule. If that practice has a disparate impact on religion, disability, or medical

condition, it’s unlawful unless it’s job-related, consistent with business necessity, and includes a mechanism for

the applicant to request an accommodation.

“Assessment” tools can unintentionally test the wrong thing

Game-like assessments or timed tests can disadvantage certain disabilities (reaction time, dexterity, sensory processing).

Video interview analysis can create risk if it evaluates tone, facial expression, or other physical characteristics

in ways that don’t map to actual job performance.

If your hiring funnel includes an automated step, your accommodation process can’t be “email us after you’re rejected.”

The accommodation pathway needs to exist during the assessment so applicants can participate meaningfully.

Bias testing and “show your work” compliance

A major theme in the final regulations is that anti-bias testing and other proactive efforts matter.

The text signals that in discrimination claims (and in available defenses), decision-makers may consider evidence (or

the lack of evidence) of anti-bias testing, including the quality, efficacy, recency, and scope of the effort, and what

the employer did in response to the results.

That doesn’t necessarily mean every employer needs an expensive third-party audit on day one. But it does mean that

“we never checked” is likely to look worse over time, especially when the tool is doing high-impact screening at scale.

What “reasonable” testing can look like

- Pre-deployment evaluation: Test the tool on representative data, check for group differences, and validate job-relatedness.

- Ongoing monitoring: Re-check impact as jobs, applicant pools, and models change.

- Vendor challenge questions: Ask what data trained the system, what fairness methods were used, and what limitations are known.

- Human review triggers: Define when a human must override or re-check the automated output.
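The "human review triggers" idea above can be written down as explicit rules instead of being left to habit. The sketch below is one hypothetical policy, not something the regulations prescribe: the `AdsOutput` fields, the 0 to 1 score scale, the cutoff, and the margin are all assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class AdsOutput:
    candidate_id: str
    score: float                 # hypothetical 0.0 to 1.0 scale
    recommended_reject: bool
    accommodation_requested: bool = False


def needs_human_review(output, cutoff=0.5, margin=0.1):
    """Return the reasons (possibly none) a human should re-check this output."""
    reasons = []
    if output.accommodation_requested:
        # The automated score may not reflect an accommodated process.
        reasons.append("accommodation requested")
    if output.recommended_reject:
        # Assumed policy: no rejection goes out on the tool's word alone.
        reasons.append("automated rejection")
    if abs(output.score - cutoff) <= margin:
        # Borderline scores are where small model errors change outcomes.
        reasons.append("score near cutoff")
    return reasons


clear_pass = AdsOutput("c1", 0.9, recommended_reject=False)
borderline = AdsOutput("c2", 0.45, recommended_reject=True,
                       accommodation_requested=True)
print(needs_human_review(clear_pass))
print(needs_human_review(borderline))
```

Returning the reasons, not just a yes or no, gives the reviewer a starting point and leaves a trail that can be documented later.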

Recordkeeping: the “paperwork” that becomes your shield

Employment compliance loves recordkeeping because it answers the eternal question: “Can you prove it?”

The regulations reinforce retention requirements and explicitly pull automated-decision system data into the recordkeeping universe.

Practically, that means employers should be prepared to preserve not only traditional hiring records (applications,

interview notes, test results), but also relevant ADS-related data, especially if a complaint is filed or an investigation starts.
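As a rough illustration of what "ADS-related data" might look like when it is actually preserved, here is a minimal sketch of a per-decision record written as one JSON line. The field names are hypothetical, and the sketch deliberately says nothing about how long to keep records or exactly which data the regulations cover; those are questions for the rule text and counsel.

```python
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class AdsDecisionRecord:
    candidate_id: str
    tool_name: str
    tool_version: str          # which model or ruleset produced the output
    inputs_summary: dict       # what the tool was given, in summary form
    output: str                # e.g. "advance", "reject", or a score
    human_reviewer: Optional[str]
    recorded_at: str           # ISO 8601 timestamp, UTC


def make_record(candidate_id, tool_name, tool_version, inputs_summary,
                output, human_reviewer=None, now=None):
    """Build an immutable record; `now` can be injected for testing."""
    timestamp = (now or datetime.now(timezone.utc)).isoformat()
    return AdsDecisionRecord(candidate_id, tool_name, tool_version,
                             inputs_summary, output, human_reviewer, timestamp)


def to_json_line(record):
    """Serialize one record as a single line, suitable for an append-only log."""
    return json.dumps(asdict(record), sort_keys=True)


record = make_record(
    "cand-001", "resume-screener", "2.3.1",
    {"fields_used": ["titles", "skills"]}, "advance",
    now=datetime(2025, 10, 1, tzinfo=timezone.utc),
)
line = to_json_line(record)
print(line)
```

Recording the tool version alongside each decision is the design choice that matters most here: without it, nobody can say later which logic produced a given outcome.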

Vendor liability and the “agent” problem

Many employers don’t build these tools. They buy them. But FEHA doesn’t disappear in a puff of procurement smoke.

The regulations clarify that an employer’s “agents” can include people (and companies) acting on behalf of the employer,

including when decisions are conducted in whole or in part through an ADS.

This matters because a vendor can perform employment-adjacent functions that look a lot like an “employment agency” role:

sorting applicants, filtering who gets seen, and shaping who gets hired. Even when a vendor argues “we don’t make the final decision,”

the system may still be doing substantial gatekeeping.

What to put in vendor contracts (the practical version)

- Transparency: Require documentation on how the tool works at a meaningful level (inputs, outputs, weighting categories).

- Testing support: Require cooperation for bias testing and impact analysis (including access to relevant logs and metrics).

- Change management: Require notice before model updates or feature changes that affect decision logic.

- Accommodation readiness: Confirm how the tool supports alternative formats, timing modifications, and accessibility needs.

- Indemnity and responsibility: Align liability terms with real-world risk (and avoid “it’s your fault” boilerplate).

Specific examples: how risk shows up in everyday workflows

Example 1: The résumé screener that “loves leadership”

A company deploys a résumé tool that prioritizes candidates with certain job titles and schools because “that’s what top performers had.”

If the historical workforce was not diverse, the model may learn patterns that replicate old inequities. The fix is not “turn off AI.”

The fix is to validate job-relatedness, evaluate impact across protected groups, remove proxy signals, and monitor outcomes.

Example 2: The availability filter that quietly penalizes religion or disability

An application system ranks candidates higher if they are available weekends and evenings. If it screens out applicants who cannot work

certain hours due to religious observance or a medical condition, the employer may need to show business necessity and provide an accommodation mechanism.

Example 3: The video interview tool that thinks eye contact equals competence

Some tools claim to measure “engagement” using facial expression and vocal patterns. But eye contact, tone, and expression vary widely across

disability, culture, and neurodiversity, and may have little to do with job performance. If the tool drives decisions, risk increases fast.

A safer approach uses structured interviews with job-related scoring and avoids pseudo-scientific inferences.

A no-panic compliance checklist (the one you’ll actually use)

- Inventory tools: List every automated system that screens, ranks, recommends, or scores people for employment benefits.

- Map decisions: Identify where automation affects outcomes (and where a human can realistically override it).

- Define selection criteria: Document what the tool measures and why it matters for the job.

- Run impact checks: Evaluate whether outcomes differ substantially across protected groups; repeat regularly.

- Build accommodations: Provide a clear way to request disability/religious accommodations during the process.

- Control vendor drift: Track updates, model changes, and new features; require notice and documentation.

- Train recruiters and managers: Teach what the tool can’t do (and what humans must still do).

- Keep records: Retain relevant hiring and ADS data, especially when decisions are challenged.

- Create an escalation path: If applicants report problems, know who investigates and how quickly you can respond.
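The first few checklist items can start life as a small inventory with an automatic gap check. The sketch below is one way to structure that, assuming hypothetical field names and a made-up one-year freshness window for impact checks; neither comes from the regulations.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class AdsTool:
    name: str
    purpose: str                        # what it screens, ranks, or scores
    owner: Optional[str]                # who is accountable for oversight
    last_impact_check: Optional[date]
    accommodation_path: bool            # can applicants request one in-process?
    vendor_change_notice: bool          # does the contract require update notice?


def open_gaps(tool, today, max_age_days=365):
    """List checklist items this tool does not yet satisfy."""
    gaps = []
    if not tool.owner:
        gaps.append("no compliance owner")
    if tool.last_impact_check is None:
        gaps.append("no impact check on record")
    elif (today - tool.last_impact_check).days > max_age_days:
        gaps.append("impact check is stale")
    if not tool.accommodation_path:
        gaps.append("no accommodation path")
    if not tool.vendor_change_notice:
        gaps.append("no vendor change notice")
    return gaps


inventory = [
    AdsTool("resume-screener", "ranks applicants", "HR Ops",
            date(2025, 6, 1), True, True),
    AdsTool("interview-scheduler", "prioritizes by availability", None,
            None, False, True),
]
today = date(2025, 10, 1)
report = {tool.name: open_gaps(tool, today) for tool in inventory}
print(report)
```

Even a list this simple answers the question most teams struggle with first: which tools exist, and who owns each one.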

What the regulations do not mean

- They do not ban AI. They reinforce anti-discrimination and accommodation obligations in an AI-shaped world.

- They do not magically make vendors responsible for everything. Employers still need governance and oversight.

- They do not reward ignorance. “We never looked for bias” is not a strategy; it’s a future exhibit.

Experience section: what this looks like in real organizations

If you want to understand how these rules land inside companies, picture a conference room (or a video call) where HR, legal,

IT, and a hiring manager all agree on one thing: “We use… what do we use again?” That’s usually the starting line. The first

“experience” most teams have is discovering how many automated steps exist in their hiring pipeline, often added over time to

speed up recruiting, reduce workload, or “improve quality,” without a clear owner for compliance oversight.

In one common scenario, a talent acquisition team swears they only use automation for “scheduling.” Then someone points out

the scheduling tool automatically prioritizes candidates who can interview within 48 hours. That seems harmless, until the team

realizes it may disadvantage applicants with caregiving duties, certain disabilities, or religious schedules. The moment a tool

starts ranking people, it’s no longer just “calendar help.” The experience here is humbling: convenience features quietly become

gatekeepers.

Another recurring story: the “assessment” that was purchased because it looked modern, fun, and allegedly predictive. A vendor demo

shows colorful dashboards and confidence scores. Everyone nods. Then an applicant requests an accommodation because the timed portion

is inaccessible. Suddenly the team must answer questions nobody asked during procurement: Can the timer be adjusted? Is there an

alternative format? Does the system log the accommodation so the applicant isn’t penalized for it? Organizations often learn the hard

way that accommodations aren’t just a legal concept; they’re an operational requirement. If there’s no path to accommodate inside the tool,

the tool is not “plug and play.” It’s “plug and pray.”

The best-run teams build a routine that feels less like panic and more like product management. They create an “ADS intake form” for any

tool that influences employment decisions: what it does, what it measures, what data it uses, what the vendor promises, and what the company

will verify. They schedule periodic bias checks (even simple ones) and track changes over time. The experience becomes iterative: deploy,

measure, adjust, document, repeat.

Finally, there’s the human side: recruiters and managers who believe the tool is “objective” because it’s automated. The strongest teams

train people to treat automated output as information, not truth. They teach hiring managers to ask: “What is the tool actually

measuring, and is that job-related?” They also teach the most underrated skill in compliance: curiosity. Curiosity is what turns “the system

rejected them” into “why did the system reject them, and does that reason make sense for this job?”

In practice, these regulations push employers toward a healthier workplace habit: don’t outsource your judgment. Use tools, but keep your

accountability. And if your algorithm can’t explain itself, it might be time to stop letting it make decisions about humans.

Bottom line

California’s finalized FEHA regulations are a clear signal: automated hiring and employment tools must operate within the same civil rights

guardrails as any other selection method. The smartest move isn’t to abandon technology; it’s to govern it: test it, document it, accommodate

people affected by it, and make sure humans stay responsible for human outcomes.